GSA SER Global Site List

Understanding the GSA SER Global Site List

When building backlinks at scale, efficiency and freshness determine how fast your campaigns gain traction. The GSA SER global site list is a curated database of target URLs specifically formatted for GSA Search Engine Ranker. Instead of scraping search engines for hours, users load verified, ready-to-post platforms, which saves time and typically raises submission success rates. This list spans multiple engines, categories, and platforms, making it one of the most important assets for serious link builders.

What Exactly Is a Global Site List in GSA SER?

Within GSA Search Engine Ranker, a site list is a plain text file containing URLs where the software will attempt to register and post backlinks. A GSA SER global site list takes that concept further. It isn't limited to one niche or one language; it aggregates working targets from various sources—forum profiles, blog comments, social bookmarks, web 2.0 properties, article directories, and wikis—across the entire globe. The list is cleaned, deduplicated, and verified for captcha bypass readiness, platform identification, and POST/GET compatibility.
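The cleaning and deduplication step can be sketched in a few lines of Python. This is only an illustration, assuming a plain text list with one URL per line; the `normalize` and `dedupe` helper names are hypothetical and are not part of GSA SER.

```python
from typing import Iterable, List, Optional
from urllib.parse import urlsplit


def normalize(url: str) -> Optional[str]:
    """Return a comparison key for a URL, or None if the line is unusable."""
    url = url.strip()
    if not url:
        return None
    try:
        parts = urlsplit(url)
    except ValueError:
        # urlsplit rejects some malformed hosts, e.g. broken IPv6 brackets.
        return None
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return None
    # Host names are case-insensitive; paths are not, so only the host is lowered.
    key = f"{parts.scheme}://{parts.netloc.lower()}{parts.path.rstrip('/')}"
    if parts.query:
        key += f"?{parts.query}"
    return key


def dedupe(lines: Iterable[str]) -> List[str]:
    """Keep the first occurrence of each URL and drop blanks and non-URLs."""
    seen = set()
    cleaned = []
    for line in lines:
        key = normalize(line)
        if key is None or key in seen:
            continue
        seen.add(key)
        cleaned.append(line.strip())
    return cleaned
```

Treating a trailing slash as insignificant is a design choice here; some servers do distinguish the two forms, so a stricter list could keep them separate.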

Key Components of a Quality Global Site List

- Engine Tags: Each URL is paired with an engine identifier like "WordPress," "vBulletin," or "MediaWiki" to help GSA SER select the right submission method.

- Contextual Metadata: Some advanced lists include category hints, language codes, or dummy credentials for guestbook and forum profiles.

- Footprint-Free URLs: Pure domain URLs or deep pages that avoid search-engine footprints, reducing the chance of being ignored during platform detection.

- Verification Status: Timestamps or last-checked flags that indicate the link is still live and accepting submissions.
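To make these components concrete, here is a minimal sketch of how one list entry could be modelled. The pipe-delimited layout (`url|engine|language|last_checked`) is an assumption made for illustration, not GSA SER's native file format, and `SiteEntry`, `parse_entry`, and `is_stale` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class SiteEntry:
    url: str
    engine: str                          # e.g. "WordPress", "vBulletin", "MediaWiki"
    language: Optional[str] = None       # optional language code such as "en"
    last_checked: Optional[date] = None  # optional verification date


def parse_entry(line: str) -> SiteEntry:
    """Parse 'url|engine|language|last_checked'; the last two fields are optional."""
    fields = [field.strip() for field in line.split("|")]
    if len(fields) < 2 or not fields[0] or not fields[1]:
        raise ValueError(f"expected at least url|engine, got: {line!r}")
    language = fields[2] if len(fields) > 2 and fields[2] else None
    checked = date.fromisoformat(fields[3]) if len(fields) > 3 and fields[3] else None
    return SiteEntry(fields[0], fields[1], language, checked)


def is_stale(entry: SiteEntry, today: date, max_age_days: int = 30) -> bool:
    """An entry with no verification date is treated as stale."""
    if entry.last_checked is None:
        return True
    return (today - entry.last_checked).days > max_age_days
```

For example, `parse_entry("http://example.com/wiki|MediaWiki|en|2024-01-15")` yields an entry whose `engine` is `"MediaWiki"`, and `is_stale` flags it once the check is more than 30 days old.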

Why a Global Site List Outperforms Default Scraping

Relying solely on GSA SER's built-in search engine harvesting can work, but it introduces several pain points. A dedicated GSA SER global site list provides immediate volume without proxy consumption on search queries. It also solves the problem of regional inconsistency—you receive targets that accept English content, multilingual spins, or niche-specific placements, all pre-sorted. Because the list is maintained by experienced users, dead URLs are pruned regularly, keeping your verified-to-failed ratio high.

Advantages Over Freshly Scraped Targets

- Zero search engine footprint: No burned proxies or captcha costs spent on harvesting. Your proxies are reserved purely for account creation and posting.

- Immediate campaign launch: Import the list and start submitting within seconds, bypassing hours of scraping and platform detection.

- Higher platform diversity: Curators often inject rare engines and non-English CMS targets that standard search scraping misses.

- Clean data format: URLs are delivered in GSA SER’s native import format, eliminating manual cleanup of invalid characters or redirect chains.

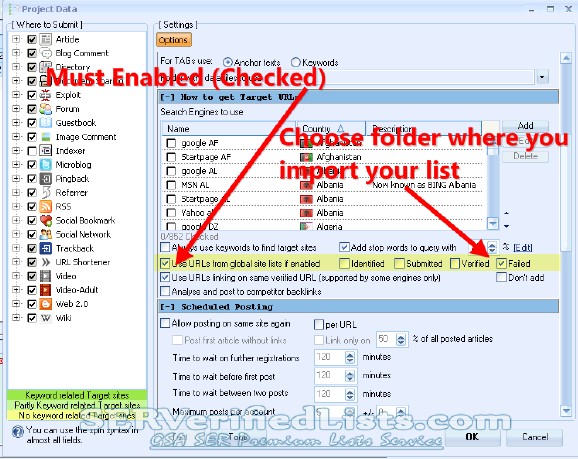

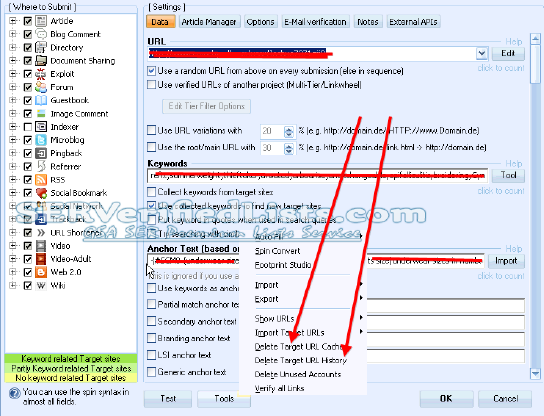

How to Import and Use a Global Site List

Loading a GSA SER global site list into your project is straightforward. Open GSA Search Engine Ranker, navigate to the project you want to enhance, and click the “Import target URLs” option. Select the text file that contains your global site list, and make sure the encoding is UTF-8 if you work with international domains. Go to the project’s options, then the “Sites” tab, and configure the import mode to “Add to existing” or “Replace” based on your needs.
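Before importing, the UTF-8 requirement can be checked outside the tool. The sketch below assumes only that the list is a text file read as raw bytes; `is_valid_utf8` and the file name are hypothetical.

```python
def is_valid_utf8(data: bytes) -> bool:
    """Return True if the raw bytes decode cleanly as UTF-8."""
    try:
        data.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return True


# Usage sketch (the file name is a placeholder):
# from pathlib import Path
# print(is_valid_utf8(Path("global_site_list.txt").read_bytes()))
```

A file that fails this check was probably saved in a legacy encoding and should be re-saved as UTF-8 before import.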

Best Practices for Integration

- Merge, don't overwrite: If you also run search engine scraping alongside the list, use the merge function to keep both sources active.

- Engine matching: Verify that GSA SER has the correct engine .ini files installed. Global lists often contain platforms like DLE (DataLife Engine) or simplified Chinese CMS that need custom engines.

- Adjust posting limits: Because a global list may contain thousands of live URLs, set realistic per-day submission caps to avoid wasting bandwidth or looking spammy.

- Schedule re-checks: Activate “Verify before posting” for each link or schedule the list for an internal re-verification run every few weeks.
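If you split a large list outside the tool to enforce a per-day cap, the arithmetic is simple chunking. This sketch assumes the list is already loaded into memory; `daily_batches` is a hypothetical helper and does not replace GSA SER's own scheduling options.

```python
from typing import Iterator, List, Sequence


def daily_batches(urls: Sequence[str], per_day_cap: int) -> Iterator[List[str]]:
    """Yield consecutive slices of at most per_day_cap URLs, one per day."""
    if per_day_cap <= 0:
        raise ValueError("per_day_cap must be positive")
    for start in range(0, len(urls), per_day_cap):
        yield list(urls[start:start + per_day_cap])
```

A list of 7 URLs with a cap of 3 yields batches of 3, 3, and 1, so the list would take three days to work through.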

Building vs. Buying a GSA SER Global Site List

Some users attempt to compile their own GSA SER global site list by running heavy scraping campaigns across hundreds of search queries. This approach is possible but time-intensive. You'll need a large proxy pool, significant captcha solving credits, and a deep understanding of footprint strings for each engine. Even then, the verification pass will discard up to 60% of the scraped URLs. Buying or downloading a curated list from a reputable community often reduces wasted resources and jumpstarts indexing.
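The cost of that discard rate is easy to underestimate. As a rough worked example, the sketch below estimates how many URLs must be scraped to end up with a given number of verified targets; the 60% figure is the upper bound quoted above, and `urls_to_scrape` is a hypothetical helper.

```python
def urls_to_scrape(target_verified: int, discard_percent: int) -> int:
    """Scraped URLs needed so that target_verified survive the discard rate."""
    if not 0 <= discard_percent < 100:
        raise ValueError("discard_percent must be in the range 0..99")
    kept_percent = 100 - discard_percent
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-target_verified * 100 // kept_percent)


print(urls_to_scrape(10_000, 60))  # 25000
```

At a 60% discard rate, only 40% of scraped URLs survive, so 10,000 verified targets require about 25,000 scraped URLs, along with the proxies and captcha credits to process them.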

What to Look for in a Pre-Made List

- Freshness guarantee: The list should be updated at least monthly, with a clear last-verified date.

- Engine breakdown: Transparent stats showing how many URLs belong to contextual platforms, wikis, bookmarks, and guestbooks.

- No duplicate or dead-link noise: A clean list free of duplicates, redirects, 404s, and parked domains.

- Multi-language support: Useful if your tiered link-building strategy spans non-English anchor distributions.
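If a seller does not publish an engine breakdown, you can compute one yourself from a tagged list. This sketch assumes the entries are already available as `(url, engine)` pairs; `engine_breakdown` is a hypothetical helper.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def engine_breakdown(entries: Iterable[Tuple[str, str]]) -> List[Tuple[str, int, float]]:
    """Return (engine, count, percent of list) rows, most common engine first."""
    counts = Counter(engine for _url, engine in entries)
    total = sum(counts.values())
    return [
        (engine, count, round(100 * count / total, 1))
        for engine, count in counts.most_common()
    ]
```

A list dominated by a single engine is a sign that the platform diversity is lower than advertised.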

Maintaining Link Health and Diversity

A GSA SER global site list is not a set-and-forget asset. Websites go offline, change CMS, or add aggressive JavaScript challenges. Using an outdated list leads to ballooning “failed to identify” entries and wasted posting attempts. Pair the list with GSA SER’s “Auto Verifier” tool, which continuously tests listed URLs and removes dead ones. Additionally, rotate lists in cycles: keep a primary global list for high-volume tiers, and a smaller, hand-verified list for money-site tier 1 campaigns.

Common Mistakes to Avoid

- Ignoring platform engine updates: Outdated engine definitions cause mass failures even on live sites. Synchronize your GSA SER engines folder before importing a new list.

- Using a single list for every project: Different niches need different link types. A generic global list works for tier 2 and 3, but your direct tier 1 might need a filtered subset of high-authority sites.

- Overloading with unverified links: Always perform a quick “test” run on a small portion to gauge success rates before scaling.

- Neglecting proxy diversity: A massive list generates many simultaneous connections. Use enough residential or rotating proxies to prevent IP bans.
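The small test run mentioned above can be prepared by drawing a random sample rather than taking the first lines of the file, which are often sorted by engine or domain. This is a minimal sketch; `pick_sample` and `success_rate` are hypothetical helpers, and the pass/fail results are assumed to come from your own test project.

```python
import random
from typing import Dict, List, Optional, Sequence


def pick_sample(urls: Sequence[str], size: int, seed: Optional[int] = None) -> List[str]:
    """Draw up to `size` distinct URLs at random; a seed makes the draw repeatable."""
    rng = random.Random(seed)
    return rng.sample(list(urls), min(size, len(urls)))


def success_rate(results: Dict[str, bool]) -> float:
    """Fraction of sampled URLs that succeeded; 0.0 for an empty result set."""
    if not results:
        return 0.0
    return sum(results.values()) / len(results)
```

If the sampled success rate is far below what the list promises, it is cheaper to find out on a few hundred URLs than on the full list.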

Frequently Asked Questions

What is the difference between a global site list and a verified list?

A GSA SER global site list typically includes URLs that have been identified as working at some point and tagged with the correct engine. A verified list goes a step further: each URL is tested right before delivery to confirm registration or posting is possible. Global lists offer scale; verified lists offer higher instant success rates but may contain fewer entries.

Can I use a global site list without any additional engines?

Yes, but the results will be limited to the engines that come pre-installed with GSA SER. Global lists often rely on community-made engine files for platforms like Joomla K2, Drupal, and obscure social networks. Without those, you'll miss a significant portion of targets.

How often should I replace my global site list?

It depends on your campaign volume. Heavy users replace or refresh their GSA SER global site list every two to four weeks. Light users can re-verify an existing list and extend its life by removing failed entries manually.
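Removing failed entries by hand gets tedious on large lists. The sketch below assumes you have the failed URLs as a second list of plain strings; `prune_failed` is a hypothetical helper, and matching is by exact URL after trimming whitespace.

```python
from typing import Iterable, List


def prune_failed(site_list: Iterable[str], failed: Iterable[str]) -> List[str]:
    """Return the site list without any URL that appears in the failed set."""
    failed_set = {url.strip() for url in failed}
    return [url.strip() for url in site_list if url.strip() not in failed_set]
```

The original order of the surviving URLs is preserved, so any sorting or grouping in the list stays intact.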

Does a global site list work for tier 1 link building?

It can, but you must filter strictly. Most white-hat tier 1 strategies involve editorial blogs and niche-specific forums that a generic global list won't cover. Use a curated, high-quality subset and apply heavier content spin and manual review.

Are free global site lists safe to use?

Free lists circulate widely and can contain spam traps, honeypot URLs, or links to de-indexed domains. They also tend to be stale. If you use a free list, run it through GSA SER’s verification first and never point it directly at a money site without a tiered buffer.

How do I create my own global site list from scratch?

Start by configuring GSA SER’s platform search with broad footprints like “powered by wordpress” and “post a comment.” Let the software scrape and identify for several days using a massive proxy pool. Export the identified targets, deduplicate them, and enrich them with engine tags. Finally, run a manual verification pass as a separate project before reusing the clean export.
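The engine-tagging step can be illustrated with a simple footprint match against page HTML. This is only a sketch of the idea, not how GSA SER identifies platforms internally: the `FOOTPRINTS` table is a small illustrative assumption, and `guess_engine` is a hypothetical helper.

```python
from typing import Optional

# Illustrative footprint strings only; a real list would be much longer.
FOOTPRINTS = {
    "WordPress": ("powered by wordpress",),
    "MediaWiki": ("powered by mediawiki",),
    "vBulletin": ("powered by vbulletin",),
}


def guess_engine(html: str) -> Optional[str]:
    """Return the first engine whose footprint appears in the page, else None."""
    text = html.lower()
    for engine, needles in FOOTPRINTS.items():
        if any(needle in text for needle in needles):
            return engine
    return None
```

Pages that match no footprint return `None` and are better left untagged than guessed, since a wrong engine tag leads to failed submissions later.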